Who Owns AI?

What the history of electrical power teaches us about the AI race (and what it doesn’t).

“Who owns AI?”

That was the central question at India’s AI Impact Summit this week. Over four days in Delhi, heads of state, tech CEOs, and policymakers circled around it. The AI Impact Forum framed it bluntly:

“Knowledge belongs collectively to society. Yet the infrastructure controlling it is concentrated among a handful of frontier labs.”

It sounds like a new question. It’s not.

A century ago, people debated the “who owns electricity?” question.

They fought about something very concrete: who controls the grid, who sets the standards, and who gets to plug in.

History doesn’t repeat. But it rhymes. And this particular rhyme is worth listening to carefully.

The First Grid Wars

The electricity industry started as a fragmented, private affair. Thomas Edison’s Pearl Street Station in Manhattan lit up its first blocks in 1882. Within two decades, thousands of small private companies competed across the United States and Europe, each with its own voltage, its own plug design, and its own pricing.

Then consolidation came fast.

By the 1920s, holding companies had swallowed most of these independents. In the US, three holding companies controlled 45% of all electricity generation by 1927. Samuel Insull’s empire alone spanned 32 states. In 1926, a thousand mergers took place in a single year. Prices were opaque. Rural communities were ignored: by the late 1920s, nearly 90% of rural American households still had no electricity. Private utilities had no commercial incentive to wire them.

Sound familiar?

A small number of players. Rapid consolidation. Opaque structures. Entire populations left outside the system. And the people inside it increasingly locked into a single provider’s ecosystem.

Then it broke.

When Insull’s highly leveraged empire collapsed during the Great Depression, it wiped out hundreds of thousands of shareholders. Private utilities, with their perceived stock manipulation and financial instability, were suddenly seen (to borrow the language of the ET summit) as “black boxes governed by opaque structures.”

A contemporary echo is hard to ignore.

OpenAI has committed $1.4 trillion in infrastructure liabilities against roughly $20 billion in annual revenue. Its partners have racked up $96 billion in debt to fund operations. HSBC estimates that even at around $200 billion in annual revenue by 2030, OpenAI would still need to raise roughly $207 billion more to fund its plans.

Meanwhile, Microsoft revealed that roughly 45% of its $625 billion in remaining cloud contracts is tied to OpenAI — a concentration that triggered a $440 billion market value wipeout in a single session. Oracle’s credit default swaps have hit record highs.

As Sebastian Mallaby of the Council on Foreign Relations put it: “People think we’re running an experiment about an amazing technology, but we’re also running an experiment about the depth of capital markets.”

Insull’s empire was built on leverage, vertical integration, and the assumption that growth would always outrun debt. The structural pattern (a single dominant player whose financial architecture entangles an entire ecosystem) deserves scrutiny, not blind faith.

The question is what happens if a similar correction reaches AI.

The backlash after Insull reshaped the electricity industry for a century.

The Public Utility Correction

The response reconfigured the market.

In the United States, Roosevelt’s New Deal brought the Public Utility Holding Company Act of 1935, which broke up the holding company pyramids and forced transparency. The Rural Electrification Act of 1936 created federal loans for cooperatives to build lines where private companies refused to go. The Tennessee Valley Authority put government directly into power generation. Within two decades, US rural electrification went from 11% to 97%.

Europe went further.

France nationalized its entire electricity sector in 1946, merging roughly 1,700 private producers, transporters, and distributors into a single state-owned entity: Électricité de France (EDF).

The rationale was explicit: energy was too strategic, too essential, and too prone to private monopoly abuse to be left ungoverned. Marcel Paul, the minister who drove the nationalization, called electricity and gas “crucial public services.” The French National Assembly voted almost unanimously.

The UK nationalized its electricity industry in 1947. Italy followed a similar path. Across much of the world, the pattern held: once electricity was recognized as foundational infrastructure (not a luxury, not an app, but the grid on which economic life depends) governments intervened to ensure access, affordability, and accountability.

The logic was straightforward: when an infrastructure becomes essential to economic sovereignty, the question of who owns and governs it stops being a commercial decision and becomes a political one.

The Plug as Power

There’s another dimension to this history that maps even more directly to AI.

Look at a world map of electrical plug types and voltages. What you see is a ghost map of empire.

British-influenced Type G plugs still dominate Singapore, Malaysia, Hong Kong, and large parts of Africa.

French plugs shaped infrastructure across West Africa and Southeast Asia. American standards spread through the Philippines and parts of Latin America.

Former colonies inherited not just parliaments and railways, but electrical standards.

And once you wire a country to a given standard (voltage, frequency, plug shape) you lock in decades of dependency on that supplier ecosystem. Equipment. Safety codes. Training curricula. Spare parts.

Nobody needed to say “Britain owns your electricity.” The standard did the work.

The design choice determined who could sell you equipment, how easy it was to switch, and what it would cost if you ever tried.

That’s technology colonialism, in cables and plastic.

AI Is Replaying the Pattern. Faster.

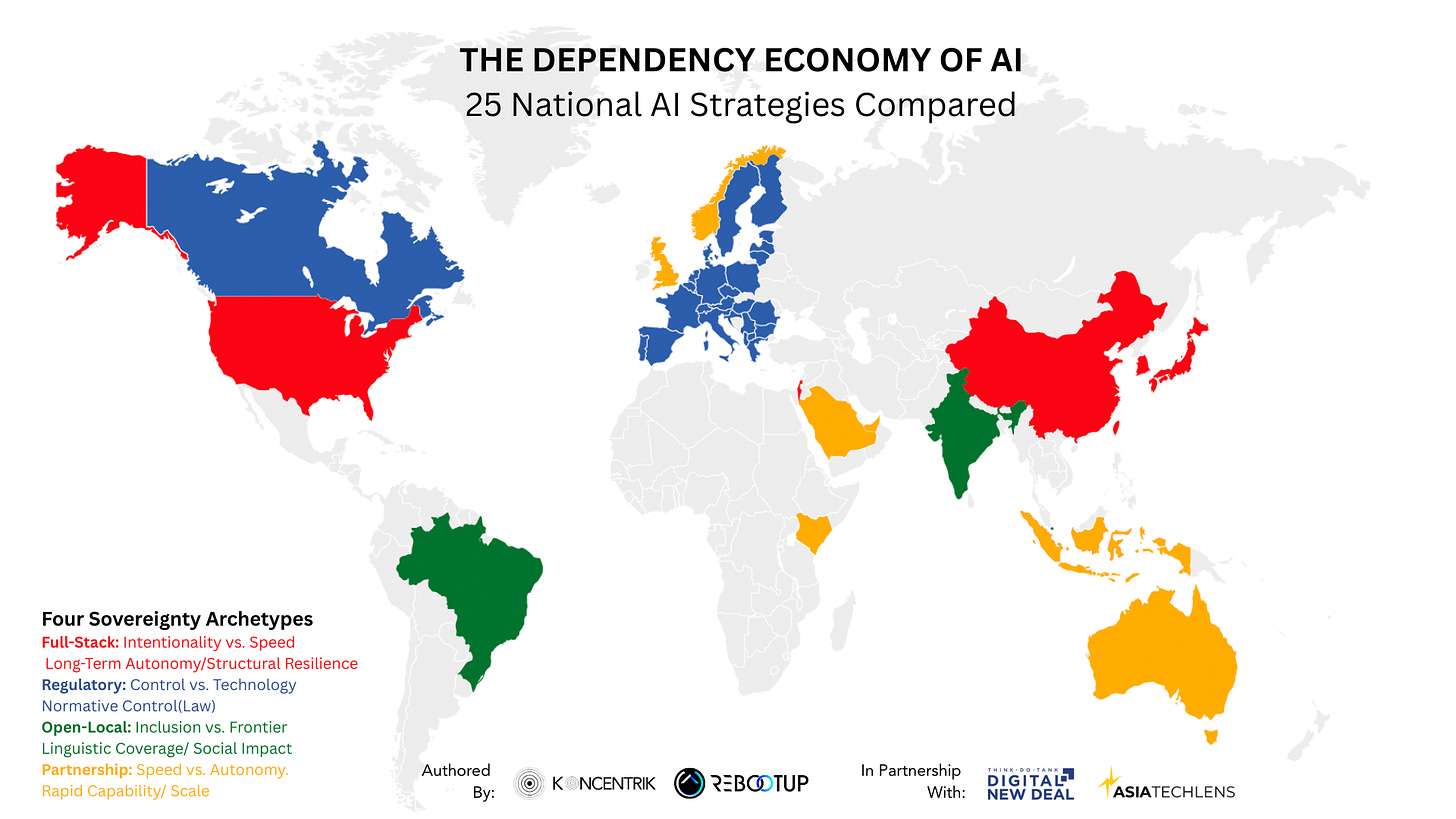

Now look at AI through the same lens: this map shows how 25 countries are approaching AI sovereignty.

A small number of companies and countries control the largest GPU clusters, the dominant clouds, and the frontier models. Their architectures and APIs are becoming default standards. Their safety benchmarks, evaluation frameworks, and governance templates are becoming default norms.

If you want to “plug into AI” as a government, bank, hospital, or manufacturer, you are increasingly nudged to use their stack, accept their risk framing, and operate under their jurisdictions.

In The Dependency Economy of AI, I mapped 25 national AI strategies and found that no country fully escapes dependency. But some manage it strategically while others simply endure it. Each optimizes one dimension of sovereignty while sacrificing another. Four archetypes emerged:

Full-Stack / Hybrid countries trade speed for long-term structural autonomy. China is the most ambitious case — investing across chips (SMIC), models (Baidu, Alibaba, DeepSeek), cloud, and data governance simultaneously. The US maintains dominance across most layers. Both accept dependence on imported raw materials and, in China’s case, key tooling like ASML lithography. This path is expensive, slow, and available only to large economies with deep industrial policy traditions.

Regulatory-First countries trade technological capability for normative control. The EU is the clearest example — the AI Act gives Brussels significant power to shape rules, but European firms remain heavily dependent on foreign hardware, foreign models, and foreign cloud infrastructure. You can regulate what you don’t build. But you can’t run what you don’t control.

Partnership-Based countries trade autonomy for speed. Saudi Arabia, the UAE, and the UK bet on deep alliances with US hyperscalers and frontier labs to rapidly build capability and scale — accepting significant dependence on American clouds, chips, and models in exchange for accelerated deployment. They move fast, but their sovereignty has a ceiling set by their partners.

Open-Yet-Local countries trade frontier performance for inclusion and social impact. Countries such as India and Brazil are investing in multilingual, locally relevant AI: India’s Bhashini language platform and Brazil’s push for Portuguese-language models, alongside Singapore’s MERaLiON for Southeast Asian languages. They accept dependence on imported GPUs and foreign base technology while prioritizing linguistic coverage and domestic relevance. They may not lead the model race, but they’re building AI that actually speaks to their populations.

Every country sits somewhere on this map. So does every enterprise.

The question is not whether you depend on external AI infrastructure — you do.

The question is whether you’ve chosen your dependencies deliberately, or inherited them by default.

In the electricity era, the question was: whose plug fits your wall?

In the AI era, the question is: whose model runs inside your institutions?

Where the Parallel Breaks Down (And Where AI Is Worse)

The electricity analogy is instructive but imperfect. And the differences matter as much as the similarities. In several respects, they make the AI challenge fundamentally harder.

Speed. Electrical standardization played out over decades. AI dependency can lock in within months: a single procurement decision, a single API contract, a single cloud migration.

Tangibility. You can see a power grid. You can inspect a plug. AI dependencies are layered, abstracted, and often invisible until something breaks: a licensing change, an export control, a model deprecation.

Governability. Electricity could be nationalized because the physical infrastructure was bounded and territorial. AI models appear weightless, reproducible, and jurisdictionally slippery, but they remain bound to physical infrastructure: data centers, energy sources, undersea cables, and chip supply chains that are very much territorial. Nationalizing AI in the traditional sense is far more complex than nationalizing a power grid. Whether it is desirable, and what “public governance of AI” would even look like, is precisely the question this moment demands we ask.

Pace of obsolescence. An electrical grid, once built, lasts for generations. AI models deprecate in months. The dependency is not just structural but temporal: you are dependent on a provider’s roadmap, not just their current product.

But the most critical difference is this: AI is not a neutral utility.

Electricity powers a light bulb the same way regardless of who manufactured the generator. It does not carry opinions. It does not shape how you think about your history, your laws, or your identity.

AI does.

When a frontier model generates text, summarizes documents, drafts policy, or automates decisions, it does so through the lens of its training data, which is overwhelmingly English-language and Western in origin.

A 2024 study published in PNAS Nexus tested five major LLMs and found that their outputs consistently aligned with the cultural values of English-speaking and Protestant European countries. Georgia Tech researchers demonstrated that this bias persists even when models are trained on or prompted in non-Western languages; an Arabic-specific LLM still defaulted to Western cultural references. And as Nature reported, despite advances, “AI models continue to be geared towards the needs of English-speaking people in high-income countries.”

Out of roughly 7,000 languages spoken worldwide, most frontier models meaningfully support fewer than a hundred. The rest, and the cultures, legal traditions, and knowledge systems they carry, are either poorly served or invisible.

The implications go far beyond translation errors.

Cutting off electricity to a household is an act of deprivation. Deploying culturally blind AI across a society is something different: it is an act of substitution. It doesn’t remove capability. It replaces local reasoning with imported defaults and averages. Gradually. Quietly. At scale.

When AI systems draft legal analysis, generate educational content, shape public health recommendations, or automate hiring decisions, they carry embedded assumptions about what is normal, fair, or important. If those assumptions were shaped predominantly by one culture’s data, one language’s internet, and one jurisdiction’s values, then every deployment is also (whether intended or not) an act of cultural overwrite.

This is not a power outage. It is something more insidious: the risk of epistemic dependency at scale (see How AI is rewiring the human brain: the generational transformation of cognition and knowing). Opinion formation shaped by foreign priors. Cognitive patterns gradually homogenized. Local knowledge systems eroded not by censorship but by irrelevance, because the dominant models simply don’t know they exist.

The Ada Lovelace Institute frames this well: “Instead of attributing equal value to different systems of knowledge, mainstream AI platforms amplify pre-existing epistemic injustices.”

If sovereignty means anything in the AI era, it must include epistemic sovereignty: the capacity for a society to reason, decide, and create knowledge on its own terms, not through the borrowed lens of someone else’s training data.

The Adapter Economy Trap

Most of the world is sliding into what I’d call the adapter economy.

For electricity, this meant drawers full of travel adapters and transformers. Functional, but not sovereign.

For AI, it looks like local teams building thin wrappers on top of foreign foundation models. Regulators copy-pasting external AI Acts into very different legal cultures. Enterprises stitching together two or three dominant APIs into dashboards and calling it “AI strategy.”

Adapters let you function. But adapters are not control. They are coping mechanisms for dependence.

The India summit illustrated this tension in real time. The ET forum themed an entire session “Data Sovereignty and the Federated Future.” One panelist on NDTV noted that “by transferring data to data centers in the global south, big tech still has control — it’s not the Government of India or any other entity.”

PM Modi declared: “AI must not reduce human beings to mere data points or raw material. AI must be democratized.” India announced a push from 60,000 to 200,000 GPUs. Over $250 billion in infrastructure commitments. “Design and Develop in India. Deliver to the World.”

The political narrative — “from Green Revolution to AI Revolution,” “AI as infrastructure, not just an app layer” — signals awareness that control over AI stacks will be treated like control over energy or food in earlier eras.

But most of that new capacity is being built through partnerships with the same US hyperscalers that already dominate the stack. Reuters reported billions committed by global tech majors.

You can re-shore data centers and GPUs. But if the models, APIs, and governance frameworks remain foreign-controlled, you may simply be re-intermediating dependency at a different layer.

Sovereign-Ready, Not Sovereign-Alone

The electricity correction worked because governments recognized a simple truth: essential infrastructure requires governance that matches its strategic importance.

For AI, the answer is unlikely to be nationalization in the traditional sense. Very few countries or companies can rebuild the full stack from scratch. And closed systems tend to fall behind open ones.

The more realistic goal is what I’ve been calling sovereign-ready AI: accept interdependence, but design it so that you keep options and bargaining power.

In The Dependency Economy of AI, I argue this means turning unilateral dependency into negotiated interdependence. Concretely:

Multi-cloud, multi-model by design. Avoid single-vendor lock-in for critical systems. Switching may be painful, but it must stay possible.

Jurisdiction-aware architecture. Route data and workloads with the legal layer in mind, not just latency and cost. Law is part of the stack.

Local capability where it counts. Build the ability to fine-tune, evaluate, and audit models, even if you start from global baselines. Don’t outsource all judgment.

A seat at the standards table. Participate in technical and governance standard-setting instead of implementing PDFs written elsewhere.
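
For readers who think in code, here is one way the first two principles might look in practice. This is a minimal sketch, not a reference design: the `Provider` record, the provider names, the jurisdiction labels, and the `route` function are all hypothetical, and the `complete` callables stand in for whatever real API clients an organisation uses. The only idea it illustrates is that the calling code talks to an interface rather than a vendor, and that the legal constraint is checked before anything else.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Provider:
    """A hypothetical model provider; every field here is illustrative."""
    name: str
    jurisdiction: str                # where data is processed, e.g. "EU"
    complete: Callable[[str], str]   # stand-in for a real API client


class NoEligibleProvider(RuntimeError):
    """Raised when no provider satisfies the constraints, or all of them fail."""


def route(prompt: str, providers: list[Provider],
          allowed_jurisdictions: set[str]) -> tuple[str, str]:
    """Try providers in priority order, skipping disallowed jurisdictions.

    Returns (provider_name, response) from the first provider that succeeds.
    """
    errors = []
    for p in providers:
        if p.jurisdiction not in allowed_jurisdictions:
            continue  # the legal layer is checked before latency or cost
        try:
            return p.name, p.complete(prompt)
        except Exception as exc:  # a real system would catch narrower errors
            errors.append(f"{p.name}: {exc}")
    raise NoEligibleProvider(
        "; ".join(errors) or "no provider in allowed jurisdictions"
    )


def _unavailable(prompt: str) -> str:
    """Simulates a provider outage for the example below."""
    raise TimeoutError("simulated outage")


# Placeholder providers, listed in order of preference.
providers = [
    Provider("global-frontier", "US", lambda s: f"[frontier] {s}"),
    Provider("regional-primary", "EU", _unavailable),
    Provider("local-finetune", "EU", lambda s: f"[local] {s}"),
]

name, answer = route("Summarise this contract.", providers, {"EU"})
print(name, answer)
```

In this toy run the US-hosted provider is skipped on jurisdiction grounds, the first EU provider fails, and the request falls through to the local fine-tune. Switching cost something (a weaker model, perhaps), but it stayed possible.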

The India summit’s explicit focus on “who owns AI” and “data sovereignty and the federated future” suggests a shift from model-centric debates to infrastructure and governance. That’s exactly where this conversation needs to go.

Three Questions for Leaders

The electricity story teaches a simple lesson: by the time people seriously ask “who owns this?”, the answer is already buried in standards, contracts, and sunk costs.

With AI, we still have a window.

So instead of asking “who owns AI?” in the abstract, I’d suggest three sharper questions for any board or leadership team:

Whose standards do you have to conform to in order to function? Which provider’s API, governance framework, or evaluation benchmark shapes your operating environment — and what happens if they change the terms?

How many independent options do you have at each critical layer? Chips, cloud, models, data, talent. Count them. If the answer at any layer is “one,” you don’t have a strategy. You have a dependency.

Where are you an adapter — and where are you a genuine co-owner of the grid? Adapters survive. Co-owners shape.
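
The second question can be made literal. The snippet below is a small sketch under obvious assumptions: the five layers come from the question above, while the vendor names are placeholders and the `stack` inventory and `single_points_of_dependency` helper are hypothetical. It simply flags every layer where the count of independent options is one or zero.

```python
# Hypothetical inventory: the layer names come from the text, the options are placeholders.
stack = {
    "chips": ["vendor-a"],
    "cloud": ["cloud-a", "cloud-b"],
    "models": ["model-a", "model-b", "model-c"],
    "data": ["in-house", "licensed-corpus"],
    "talent": ["in-house", "partner-lab"],
}


def single_points_of_dependency(inventory: dict[str, list[str]]) -> list[str]:
    """Return the layers that have at most one distinct option."""
    return [
        layer
        for layer, options in inventory.items()
        if len(set(options)) <= 1
    ]


print(single_points_of_dependency(stack))  # ['chips']
```

The hard part is not the counting but the honesty of the inventory: two clouds that both resell the same chips, or two models served from the same jurisdiction, may not be independent options at all.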

Electricity made many countries dependent on foreign plugs and voltages for a century. AI risks creating similar dependencies over cognition, coordination, and control — only faster, and less visibly.

The difference is that this time, we can see the pattern forming.

The question is whether we’ll wire the system differently.

Thanks for reading!

Damien

This article builds on The Dependency Economy of AI: Sovereignty, Chips, and the World’s Real Chokepoints, published with Asia Tech Lens and the Digital New Deal.

For a practitioner lens on how AI reshapes enterprise value, see What Does AI Really Do to Cash Flows? on Asia Tech Lens.

Damien Kopp is Founder & Managing Director of RebootUp, an AI strategy and transformation advisory that helps organisations compress AI time-to-value and turn AI into measurable economic output. He publishes KoncentriK, a newsletter on Technopolitics — the intersection of AI, geopolitics, and business strategy — and teaches applied AI as Associate Faculty at Singapore Management University. Get in touch: damien.kopp@rebootup.com